Card Game To Improve Critical Thinking

Julia Gressick, Ph.D.

Joel B. Langston

Author Note

Julia Gressick, PhD, is an Associate Professor of Instructional Technology at Indiana University South Bend. Joel B. Langston is a graduate student in Liberal Studies and Manager of Media Services at Indiana University South Bend. Correspondence regarding this manuscript should be directed to Julia Gressick at: Department of Teacher Education, Indiana University South Bend, School of Education, 1700 Mishawaka Ave., Post Office Box 7111, South Bend, IN 46634-7111. Phone: (574) 520-4281, Fax: (574) 520-4550, Email: jgressic@iusb.edu.

Abstract

Fostering critical thinking skills is a ubiquitous goal across disciplines and social contexts. Productive solutions to educational, content-based and social problems can emerge through well-reasoned conversation. How best to support the development of these skills, however, has been a topic of debate. In this study, we investigated the design and effectiveness of 13 Fallacies, a card-based game that focuses on undergraduate students’ understanding of common fallacies in thinking and was designed to improve their reasoning. We completed an iterative design phase and a play-testing phase, and we collected data on student learning outcomes across two semesters of classroom implementation. Results indicate that 13 Fallacies improved student understanding of common fallacies in thinking and promoted social reasoning for at-risk undergraduate students.

Show us your hand: How a card game improved critical thinking for at-risk college students

A common goal of instructors across disciplines in higher education is to foster undergraduates’ critical thinking and argumentative reasoning skills (e.g., Gellin, 2003). Many agree it is important for students to understand errors in thinking, recognize persuasion, and distinguish among opinions, reasoned judgments and facts (Halpern, 2003). These skills are essential for success across domains. Further, they hold the power to transform lives through productive social communication and engagement. Moreover, critical thinking skills consistently rank among the top competencies that employers seek in candidates holding post-secondary degrees (Casner-Lotto & Barrington, 2006). How best to cultivate and support the development of these skills, however, has been a topic of debate (e.g., Cavagnetto, 2010). Despite our natural tendency to argue from a young age (Hay & Ross, 1982), we commit many errors in our daily thinking. For example, premises might be unacceptable, based solely on opinion, or unrelated to or inconsistent with conclusions (Kuhn, 2010). Experts who are cited may not be credible, and important information might be missing from students’ arguments. Recognizing errors in our thinking can be a challenge, since flawed arguments often seem persuasive and resemble sound reasoning despite their unsound nature (Toulmin, Rieke & Janik, 1984). Whether fallacies are committed intentionally, with the goal of persuasion, or simply through oversight, it is important for undergraduate students to understand them in order to defend against them and to strengthen the arguments they advance.

Gaming has become an increasingly popular way to engage students in learning and reviewing content (Swanson, 2014). Leveraging the human affinity for play found across cultures, some games go beyond domain knowledge (e.g., Squire & Jan, 2007) to focus on more general skills, such as argumentation. While digital games have become increasingly popular, card-based games help bridge the digital divide and provide access to learning where technological resources might not be readily available (Bochennek, Wittekindt, Zimmermann & Klingebiel, 2007). Card games used as pedagogy also foster collaborative learning and essential 21st-century habits of mind, including critical thinking and productive discourse skills (Reese & Wells, 2007).

Considering the broad critical thinking goals of higher education and the recognized benefits of pedagogical games, we designed and implemented a card game called 13 Fallacies in a course that prepares diverse incoming students who have been identified as facing barriers to college success. The game aims to scaffold students’ recognition of fallacies in others’ thinking and their avoidance of fallacies in their own social negotiation. Our research was guided by the following research questions: 1. Does playing 13 Fallacies promote students’ understanding of common fallacies in thinking, as evidenced by performance on a written assessment? 2. Does a guided video activity followed by playing 13 Fallacies improve students’ understanding of common fallacies in thinking when compared to playing 13 Fallacies alone? 3. Do students feel positively about 13 Fallacies as a learning tool, as evidenced by self-reported survey responses?

Theoretical framework

We framed the development of 13 Fallacies within Kuh’s (2008) high-impact practices, which benefit diverse college students’ learning outcomes, affect and overall development. 13 Fallacies connects to Kuh’s high-impact practices because it is a collaborative learning approach designed to improve undergraduates’ critical thinking skills while building a community of learners.

This research is further guided by theories of argumentative reasoning (Toulmin, 1958). Toulmin, Rieke & Janik (1984) assert that recognizing fallacies in thinking is an important component of reasoning and of constructing sound arguments. Framing this as “a kind of sensitivity training,” Toulmin, Rieke & Janik describe recognizing errors in others’ reasoning as an important step toward avoiding them in one’s own thinking. In 13 Fallacies, students are expected both to advance and to defend arguments about common fallacies.

This research is also guided by the potential cognitive and motivational benefits of game play (Gee, 2003). Games can promote risk-taking in a non-threatening manner because failure is offloaded to the process of play and becomes less associated with the individual. Further, the choices afforded by gameplay promote student agency in decision making, which may lead to increased engagement and motivation.

We describe 13 Fallacies as a “lightly-contextualized” learning experience. The content of the game was written with general problem-solving skills in mind (Perkins & Salomon, 1989). When the focus of learning is the development of a set of skills, in this case fallacy recognition as a means to improve critical thinking, providing a generalizable context can support the transfer of those skills to other domains. Since 13 Fallacies was played in a course for incoming freshmen designed to cultivate skills essential to their success in future classes, we carefully considered how to provide meaningful context without overly situating the skills within a specific domain. In turn, this approach also prepares students for future learning (Bransford & Schwartz, 1999) and success in other courses. Because of this design choice, 13 Fallacies can be adapted to various contexts to teach and learn about fallacies in thinking.

Methodology

This paper reports on the development of 13 Fallacies and two iterations of data collection. To develop the game, we used a design-based research approach (Brown, 1992; Collins, 1990). This approach allowed us to produce an instructional intervention and systematically examine the resulting student learning in a classroom environment. We separated the iterations of development into two phases: initial development and classroom implementation. Initial development involved the design and play testing of 13 Fallacies. The classroom implementation phase focused on the wide-scale introduction of the game produced in the initial development phase and on its influence on student learning outcomes in two conditions. We collected data during the Fall 2015 and Fall 2016 semesters. During each semester, 13 Fallacies was played 10 times over the course of five weeks, and each session lasted 30 minutes. We obtained human subjects approval for all research reported in this paper.

In our first study, conducted after iteratively designing and play testing 13 Fallacies, we administered isomorphic pre- and post-assessments to measure the effectiveness of the game. The assessments measured students’ ability to identify the common fallacies covered in the game. Students completed the pre-assessment before the first session, and the post-assessment was administered on the last day of the semester.

Our second study, in Fall 2016, used an in vivo experimental design. In vivo experimental design refers to research that involves manipulating smaller elements of instruction and determining the effect of these manipulations on student learning (e.g., Aleven & Koedinger, 2002; Koedinger, Aleven, Roll & Baker, 2009; Salden & Aleven, 2009; Salden & Koedinger, 2009). This approach differs from traditional experimental design because the study is situated in an authentic learning setting, in this case a college course, rather than in a lab setting. While it is similar to design-based research (Brown & Campione, 1996; Collins, 1990; The Design-Based Research Collective, 2003), it differs primarily in its inclusion of a comparison group and its use of a single iteration of instruction.

Data sources

The context of this research was an academic skills course for diverse groups of incoming freshmen at a Midwestern regional state university campus. The course, U100, focuses on the development of essential academic and critical thinking skills to prepare students for future college courses and promote retention. An essential component of this goal is to provide rigorous, relevant and relation-centered experiences for students. Enrolled in this course are the University’s most at-risk students, who are conditionally admitted because they do not meet minimum admission requirements. They enter college with SAT scores as low as the mid-700s and possess minimal skills for navigating higher education.

In Fall 2015, all students played 13 Fallacies after watching a brief video explaining the rules. In Fall 2016, we were interested in the impact of a supplemental instructional video activity on student learning: students watched a video about fallacies in thinking and completed a worksheet. To analyze the effectiveness of this approach, some U100 sections used the video and worksheet and others did not. We administered pre- and post-assessments across these conditions to determine whether the video supplement improved students’ learning outcomes compared to gameplay alone. In total, 72 students completed pre- and post-tests in Fall 2015 and 77 students completed pre- and post-tests in Fall 2016.

Description of 13 Fallacies

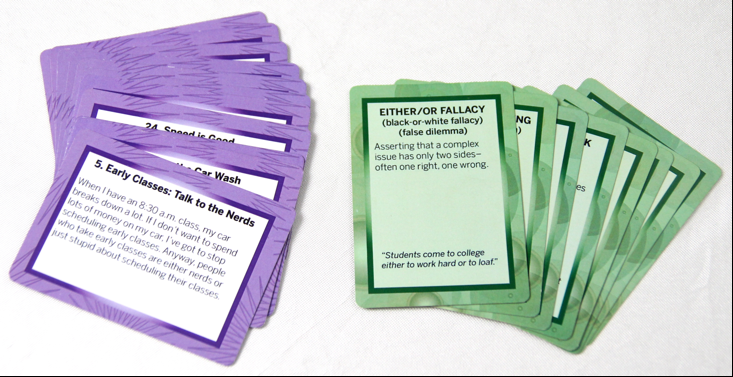

13 Fallacies is played in small groups and is designed around a mechanic similar to that of the card game Apples to Apples. In a round of play, each player draws five fallacy cards, each of which provides a definition and an example of a specific common fallacy in thinking (e.g., card stacking, appeal to pity). One player serves as the judge; this role rotates among all players, and a round of play is complete once every player has judged. The judge reveals a “scenario” card describing a situation that contains at least three fallacies (Authors, 2015). Figure 1 illustrates fallacy and scenario cards. The scenarios are framed as civic situations relevant to the experiences of undergraduate students at a regional state campus. For example: “There should not be an attendance policy for college classes. Students are either trusted to show up to class, or they are treated like grade school kids. Just look at all that college students have accomplished. Be a teacher who really cares about your students and drop the attendance policy.”

Figure 1. Fallacy and scenario cards from 13 Fallacies.

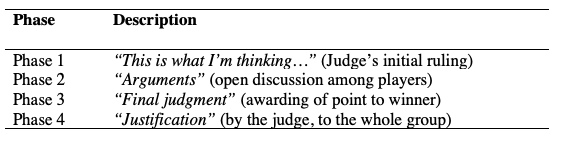

The game is organized into four phases, described in Table 1. Each player other than the judge plays a fallacy card and then justifies why it best describes the scenario. The player selected by the judge receives a token signifying the best response, and the player with the most tokens at the end of the game wins.

Table 1. Phases of Play in 13 Fallacies.
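The turn structure described above can be sketched as a small Python simulation. This is a hypothetical illustration, not the authors’ materials: the deck contents, the names `play_round` and `key_judge`, and the answer-key judging rule are all assumptions, and simulated players simply play the last card in their hand rather than choosing and justifying a card through the four phases of Table 1. Following the description above, each player draws a fresh five-card hand per round, and a round ends once every player has judged.

```python
import random
from collections import Counter
from typing import Callable, Dict, List

HAND_SIZE = 5  # each player draws five fallacy cards per round

# Hypothetical judging rule: (scenario, {player: card played}) -> winning player
JudgeRule = Callable[[str, Dict[str, str]], str]


def play_round(players: List[str], deck: List[str], scenarios: List[str],
               judge_rule: JudgeRule, rng: random.Random) -> Counter:
    """Play one round in which every player serves as judge exactly once."""
    if len(players) - 1 > HAND_SIZE:
        raise ValueError("each player needs one card for every turn they do not judge")
    if len(scenarios) < len(players):
        raise ValueError("need one scenario card per judge turn")
    if len(deck) < HAND_SIZE * len(players):
        raise ValueError("deck too small to deal five cards to every player")

    shuffled = deck[:]  # copy so the caller's deck is not modified
    rng.shuffle(shuffled)
    hands = {p: [shuffled.pop() for _ in range(HAND_SIZE)] for p in players}

    tokens: Counter = Counter()
    for judge, scenario in zip(players, scenarios):  # the judge role rotates
        # Every non-judge plays one card; in the real game each player picks
        # the card that best fits the scenario and justifies it aloud.
        plays = {p: hands[p].pop() for p in players if p != judge}
        tokens[judge_rule(scenario, plays)] += 1  # one token per judge turn
    return tokens


# --- Usage with made-up cards (not the actual 13 Fallacies deck) ---
deck = [f"fallacy {i}" for i in range(1, 14)] * 2  # assumed: 13 types, two copies each
scenarios = [f"scenario {i}" for i in range(1, 5)]
answer_key = {s: {"fallacy 1", "fallacy 2", "fallacy 3"} for s in scenarios}


def key_judge(scenario: str, plays: Dict[str, str]) -> str:
    """Pick a player whose card appears in the (hypothetical) answer key."""
    matches = sorted(p for p, card in plays.items() if card in answer_key[scenario])
    return matches[0] if matches else sorted(plays)[0]  # deterministic fallback


players = ["Ana", "Ben", "Cy", "Dee"]
tokens = play_round(players, deck, scenarios, key_judge, random.Random(0))
print(tokens.most_common(1))  # the player holding the most tokens
```

Passing the judging rule in as a function keeps the round logic separate from how a ruling is made, which mirrors the design note later in the paper that instructors were given a key for checking the judge’s rulings.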

Results and discussion

Designing 13 Fallacies

To design 13 Fallacies, we began by merging academic goals, theories of learning, and game design mechanics (Schell, 2008). After developing a prototype, we play tested the game through three iterations. During each play test, we collected field notes and then conducted follow-up discussions with participants. After the first test, we identified areas that needed improvement, including the number of cards in each player’s hand and a mechanism for students to confirm whether the judge’s ruling was accurate for a specific scenario. As a result, we modified the rules so that each player holds five cards rather than 12, and we provided instructors with a key for checking the accuracy of the judge’s ruling. Evidence from field notes and player interviews supported these improvements. Another concern that arose was how to scaffold the social negotiation that occurs once all of the cards are played. After the second play test, we developed the four phases of the game described in Table 1. During a third play test, we observed that both the mechanics and the approach engaged students, and we proceeded to produce the game for wide-scale implementation.

Study 1: 13 Fallacies promotes understanding

During the Fall 2015 semester, all students enrolled in U100 played 13 Fallacies under the same conditions. Our quantitative analysis of students’ pre- and post-test data showed that students’ ability to identify common fallacies improved after playing 13 Fallacies. The average pre-test score was 28% (SD = 14.83), suggesting that students’ knowledge of common fallacies prior to gameplay was limited. The average post-test score was 70.25% (SD = 12.75). The average individual gain between pre- and post-test was 36.92% (SD = 16.08). The gain in student scores was statistically significant, t(71) = 19.509, p < .001, which suggests that 13 Fallacies helped students learn to identify common fallacies in thinking.

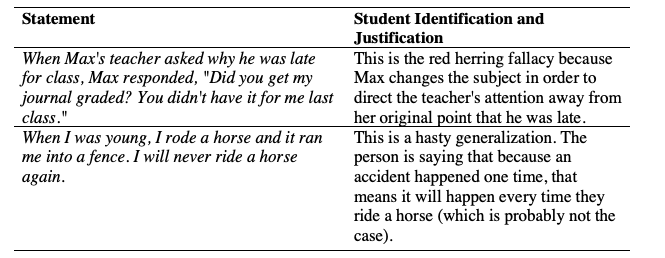
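Because each student took both tests, the Study 1 comparison is a paired-samples t-test on individual gain scores: t equals the mean gain divided by its standard error (SD of gains divided by the square root of n), with n - 1 degrees of freedom. The Python sketch below recomputes this from the rounded summary statistics reported above; the function name is ours, not the authors’. It yields roughly t(71) ≈ 19.48, close to but not identical to the reported 19.509, a small gap that may reflect details of the original analysis not reported here.

```python
import math
from typing import Tuple


def paired_t_from_gains(mean_gain: float, sd_gain: float, n: int) -> Tuple[float, int]:
    """Paired-samples t statistic and degrees of freedom from gain-score summaries."""
    standard_error = sd_gain / math.sqrt(n)  # standard error of the mean gain
    return mean_gain / standard_error, n - 1


# Study 1 summary statistics: mean gain 36.92 points, SD 16.08, n = 72 students
t, df = paired_t_from_gains(36.92, 16.08, 72)
print(f"t({df}) = {t:.2f}")
```

The test is run on the individual gains rather than on the two test means separately, because pairing each student with their own pre-test removes between-student variability from the comparison.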

Anecdotal analyses of student writing following the post-test indicated that students were able not only to identify common fallacies in others’ thinking but also to provide written justifications of their own reasoning. This is illustrated by the examples in Table 2.

Table 2. Student Justifications of Fallacy Identifications.

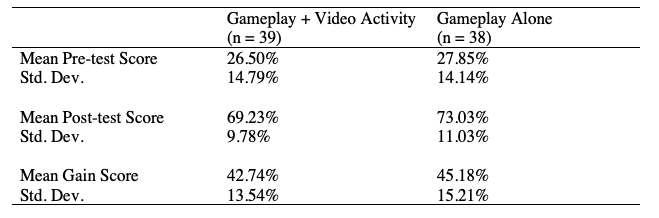

Study 2: Supplemental video instruction did not enhance learning

In a second study, we analyzed the role of an instructional video activity as a supplement to U100 students’ play of 13 Fallacies. In Fall 2016, some students viewed an instructional video about fallacies that was supplemented with a worksheet; to analyze the effectiveness of this approach, some U100 instructors used the video and worksheet and others did not. We conducted pre- and post-test analyses across these two conditions. Students in classes that used the video and worksheet as a supplement to gameplay (n = 39) had an average pre-test score of 26.50% (SD = 14.79) and post-test score of 69.23% (SD = 9.78). Their mean individual gain in performance was 42.74% (SD = 13.54). Students in the comparison group, which did not complete the video and worksheet activity (n = 38), had an average pre-test score of 27.85% (SD = 14.14) and post-test score of 73.03% (SD = 11.03). Their mean individual gain in performance was 45.18% (SD = 15.21). Mean learning gains were therefore approximately the same across conditions, and there was no significant difference between the gain scores of the two groups, t(75) = -.744, p = .2295 (one-tailed). This suggests that both approaches to instruction helped students learn and that the video and worksheet activity provided no added benefit.

Table 3. Mean Assessment Scores Across Conditions.

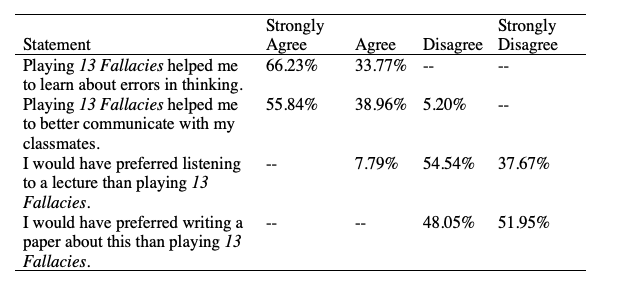
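Study 2 compares gain scores between two independent groups, so the relevant statistic is an independent-samples t-test. Assuming a Student’s (pooled-variance, equal-variance) test, the reported t(75) = -.744 can be reproduced from the summary statistics above. The Python sketch below also computes the p-value by integrating the t density with Simpson’s rule, using only the standard library; the function names are ours. The result indicates that p = .2295 corresponds to a one-tailed test; the two-tailed value is about .46. Either way the difference is far from significant.

```python
import math
from typing import Tuple


def pooled_t_from_stats(m1: float, s1: float, n1: int,
                        m2: float, s2: float, n2: int) -> Tuple[float, int]:
    """Student's independent-samples t (equal variances assumed) from summaries."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / df
    standard_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (m1 - m2) / standard_error, df


def t_upper_tail(t: float, df: int, steps: int = 1000) -> float:
    """P(T > |t|) for Student's t, integrating the density with Simpson's rule."""
    norm = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)) / math.sqrt(df * math.pi)

    def density(x: float) -> float:
        return norm * (1 + x * x / df) ** (-(df + 1) / 2)

    b = abs(t)
    h = b / steps  # steps must be even for Simpson's rule
    area = density(0.0) + density(b)
    for i in range(1, steps):
        area += (4 if i % 2 else 2) * density(i * h)
    area *= h / 3  # probability mass between 0 and |t|
    return 0.5 - area  # symmetric density: half the mass lies above 0


# Study 2 gain scores: video + worksheet (n = 39) vs. gameplay only (n = 38)
t, df = pooled_t_from_stats(42.74, 13.54, 39, 45.18, 15.21, 38)
p_one_tailed = t_upper_tail(t, df)
p_two_tailed = 2 * p_one_tailed
print(f"t({df}) = {t:.3f}, one-tailed p = {p_one_tailed:.4f}, two-tailed p = {p_two_tailed:.4f}")
```

Where SciPy is available, `scipy.stats.ttest_ind_from_stats` performs the same pooled test directly from means, standard deviations and group sizes.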

Student perceptions of 13 Fallacies

We were also interested in students’ perceptions of 13 Fallacies as a learning tool compared to more traditional instructional approaches. To measure this, students completed a brief survey at the end of their post-test in Fall 2016. Students were asked to indicate whether they strongly agreed (4), agreed (3), disagreed (2) or strongly disagreed (1) with the following statements: 1. Playing 13 Fallacies helped me to learn about errors in thinking. 2. Playing 13 Fallacies helped me to better communicate with my classmates. 3. I would have preferred listening to a lecture than playing 13 Fallacies. 4. I would have preferred writing a paper about this than playing 13 Fallacies.

Table 4 reports the percentage of students who selected each rating for these statements. Across conditions, most students indicated that 13 Fallacies helped them learn and helped them communicate better with their classmates. Furthermore, the majority of U100 students indicated that they preferred the game-based approach to listening to a lecture or writing a paper on fallacies in thinking.

Table 4. Student Perceptions of 13 Fallacies.
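Percentages like those in Table 4 come from tallying each statement’s ratings on the 4-point scale. The Python sketch below shows such a tally; the responses are made up for illustration and are not the study’s data, and the function name is ours. Statements 3 and 4 are worded so that disagreement signals a preference for the game, so for those items the combined disagree share is the figure of interest.

```python
from collections import Counter
from typing import Dict, List

# Rating scale used in the survey
SCALE = {4: "strongly agree", 3: "agree", 2: "disagree", 1: "strongly disagree"}


def rating_percentages(responses: List[int]) -> Dict[str, float]:
    """Percentage of respondents choosing each rating for one statement."""
    if not responses:
        raise ValueError("no responses to tabulate")
    counts = Counter(responses)  # missing ratings count as 0
    return {label: 100 * counts[score] / len(responses) for score, label in SCALE.items()}


# Hypothetical ratings from ten students for one statement (not study data)
ratings = [4, 4, 3, 3, 3, 2, 1, 4, 3, 3]
pct = rating_percentages(ratings)
agree_share = pct["strongly agree"] + pct["agree"]
disagree_share = pct["disagree"] + pct["strongly disagree"]
print(pct, agree_share, disagree_share)
```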

In addition to rating their agreement with these statements, students had the opportunity to provide written comments about the game. Fourteen students supplied additional feedback; their comments either indicated that they enjoyed playing the game (e.g., “the game is actually really helpful”) or offered suggestions for improving the implementation (e.g., “I wish we got more time to play the game”).

Conclusion

Playing 13 Fallacies improved understanding of common fallacies in thinking among conditionally admitted incoming freshmen at a regional state university. In a second study, which compared gameplay alone with gameplay supplemented by a video and worksheet activity, we found no significant difference in student learning outcomes between these conditions. This suggests that students did not benefit from the additional instructional activity and that learning occurred through the process of play. Students reported that the game helped them learn about fallacies and communicate better with classmates, and that they preferred gameplay to listening to a lecture or writing a paper about the topic.

This research contributes to a larger discussion on how best to improve the quality of undergraduates’ critical thinking and argumentation skills by focusing on identifying common fallacies in one’s own thinking, in others’ thinking and in cultural beliefs. Through a ‘lightly-contextualized’ approach, students have opportunities to engage actively with their peers while analyzing their own and others’ thinking, developing habits of mind necessary for success in college. Further, because our research targeted diverse, conditionally admitted, at-risk undergraduate students, our goal was to scaffold the skills of productive argumentation, which are often nuanced or bound to specific contexts in ways that hinder abstraction and transfer to new domains (Perkins & Salomon, 1989). Our design makes common errors in thinking explicit and provides opportunities that may promote enduring understanding, prepare students for future learning, and create a more equitable learning experience for students who might have limited development and prior knowledge of these skills. 13 Fallacies has the potential to be adapted to various contexts as a way to promote students’ critical thinking, argumentation skills and general communication.

References

Aleven, V. and Koedinger, K.R. (2002). An effective metacognitive strategy: Learning by doing and explaining with a computer-based Cognitive Tutor. Cognitive Science 26(2).

Bochennek, K., Wittekindt, B., Zimmermann, S.Y. and Klingebiel, T. (2007). More Than Mere Games: A Review of Card and Board Games for Medical Education. Medical Teacher 29(9): 941-948.

Bransford, J.D. and Schwartz, D.L. (1999). Rethinking transfer: A simple proposal with multiple implications. Review of Research in Education 24: 61-101.

Brown, A.L. (1992). Design experiments: Theoretical and methodological challenges in creating complex interventions in classroom settings. The Journal of the Learning Sciences 2(2): 141-178.

Brown, A.L. and Campione, J.C. (1996). Psychological theory and the design of innovative learning environments. In Schauble, L. and Glaser, R. (eds) Innovations in learning: New environments for education. Hillsdale, NJ: Lawrence Erlbaum Associates.

Casner-Lotto, J. and Barrington, L. (2006). Are They Really Ready to Work? Employers’ Perspectives on the Basic Knowledge and Applied Skills of New Entrants to the 21st Century U.S. Workforce. Report by Partnership for 21st Century Skills.

Cavagnetto, A.R. (2010). Argument to foster scientific literacy: A review of argument interventions in K-12 science contexts. Review of Educational Research 80(3): 336-371.

Collins, A. (1990). Toward a Design Science of Education. New York, NY: Center for Technology in Education.

Gee, J. (2003). What Video Games Have to Teach Us about Learning and Literacy (2nd ed). Houndmills, Basingstoke, Hampshire, England: Palgrave Macmillan.

Gellin, A. (2003). The effect of undergraduate student involvement on critical thinking: A meta-analysis of the literature 1991-2000. Journal of College Student Development 44(6): 746-762.

Halpern, D. (2003). Thought and Knowledge (4th ed). Mahwah, NJ: Erlbaum.

Hay, D.F. and Ross, H.S. (1982). The social nature of early conflict. Child Development 53: 105-113.

Koedinger, K.R., Aleven, V., Roll, I. and Baker, R. (2009). In vivo experiments on whether supporting metacognition in intelligent tutoring systems yields robust learning. In Hacker, D.J., Dunlosky, J. and Graesser, A.C. (eds) Handbook of Metacognition in Education, The Educational Psychology Series. New York: Routledge, pp. 897-964.

Kuh, G.D. (2008). High-impact educational practices: What they are, who has access to them, and why they matter. Washington, DC: AAC&U.

Kuhn, D. (2010). Teaching and learning science as argument. Science Education 94(5): 810-824.

[Authors, 2015, omitted for peer review]

Perkins, D.N. and Salomon, G. (1989). Are cognitive skills context bound? Educational Researcher 18(1): 16-25.

Reese, C. and Wells, T. (2007). Teaching Academic Discussion Skills With a Card Game. Simulation & Gaming 38(4): 546-555.

Salden, R.J.C.M. and Aleven, V. (2009). In vivo experimentation on self-explanations across domains. Symposium at the Thirteenth Biennial Conference of the European Association for Research on Learning and Instruction.

Salden, R.J.C.M. and Koedinger, K.R. (2009). In vivo experimentation on worked examples across domains. Symposium at the Thirteenth Biennial Conference of the European Association for Research on Learning and Instruction.

Schell, J. (2008). The Art of Game Design: A book of lenses. Boston, MA: Elsevier.

Squire, K.D. and Jan, M. (2007). Mad City Mystery: Developing Scientific Argumentation Skills With a Place-based Augmented Reality Game on Handheld Computers. Journal of Science Education and Technology 16(1): 5-29.

Swanson, K. (2014). Digital games and learning: A world of opportunities. Tech & Learning 34(6): 22.

The Design-Based Research Collective (2003). Design-based research: An emerging paradigm for educational inquiry. Educational Researcher 32(1): 5-8.

Toulmin, S. (1958). The Uses of Argument. Cambridge: Cambridge University Press.

Toulmin, S., Rieke, R. and Janik, A. (1984). An Introduction to Reasoning. New York: Macmillan.

This work is licensed under a Creative Commons Attribution 4.0 International License.